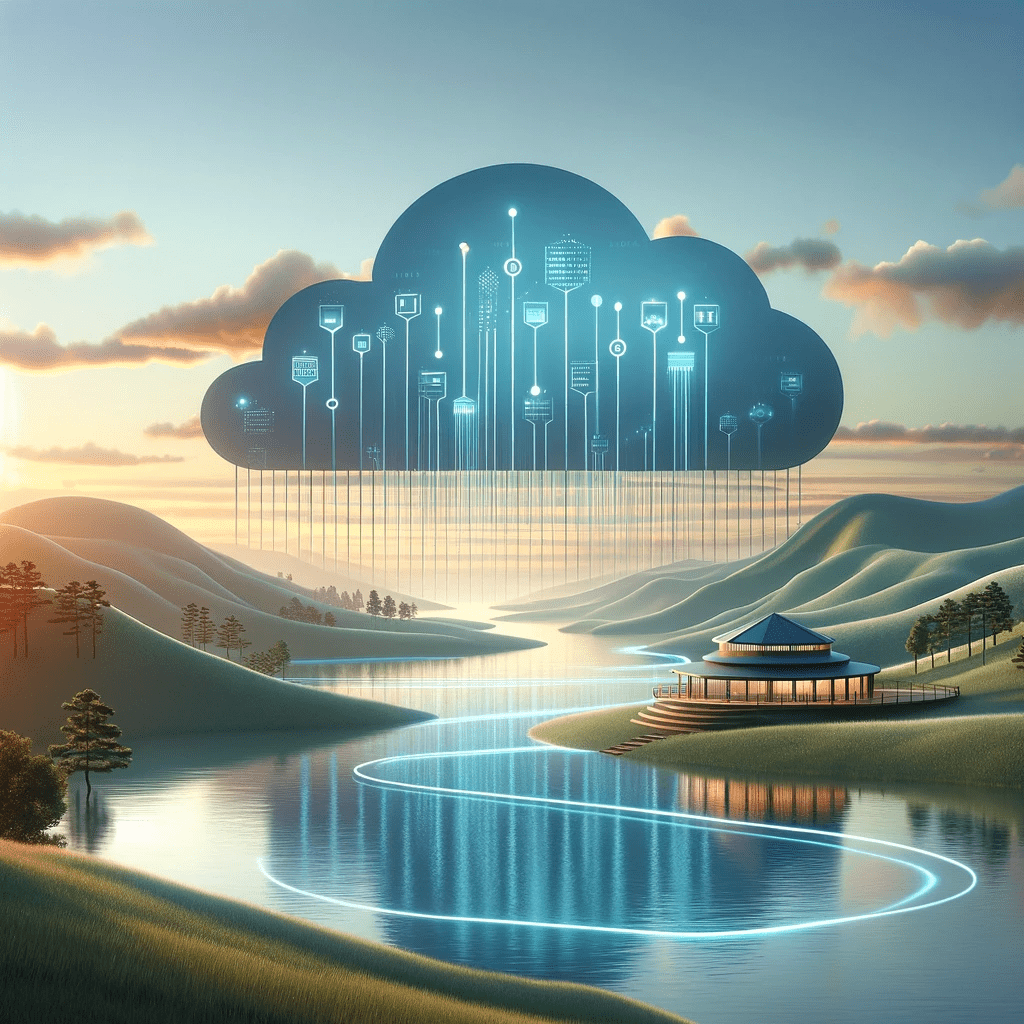

The Modern Data Stack with dbt Framework

In today’s data-driven world, businesses rely on accurate and timely insights to make informed decisions and gain a competitive edge. However, the path from raw data to actionable insights can be challenging: it requires a robust data platform with automated transformation built into the pipeline, underpinned by data quality and security best practices. This is where dbt (data build tool) steps in, revolutionising the way data teams build scalable and reliable data pipelines and facilitating seamless deployments across multi-cloud environments.

What is a Modern Data Stack?

The term modern data stack (MDS) refers to a set of technologies and tools that are commonly used together to enable organisations to collect, store, process, analyse, and visualise data in a modern and scalable fashion across cloud-based data platforms. The following diagram illustrates a sample set of tools and technologies that may exist within a typical modern data stack:

The modern data stack includes dbt as a core part of the transformation layer.

What is dbt (data build tool)?

dbt (data build tool) is an open-source data transformation and modelling tool used to build, test and maintain data infrastructure for organisations. The tool was built to provide a standardised approach to data transformations using simple SQL queries, and it can also be extended to develop models in Python.

Advantages of dbt

dbt offers several advantages for data engineers, analysts, and data teams. Key advantages include:

Overall, dbt offers a powerful and flexible framework for data transformation and modelling, enabling data teams to streamline their workflows, improve code quality, and maintain scalable and reliable data pipelines in their data warehouses across multi-cloud environments.

Data Quality Checkpoints

Data quality is an issue that involves many components.
There are nuances, organisational bottlenecks, silos, and countless other factors that make it a very challenging problem. Fortunately, dbt-checkpoint, an open-source set of pre-commit hooks for dbt projects, can help address many of these issues. With dbt-checkpoint, data teams are able to:

Data Profiling with PipeRider

Data reliability gets a boost from better dbt integration, data assertion recommendations, and reporting enhancements. PipeRider is an open-source data reliability toolkit that connects to existing dbt-based data pipelines and provides data profiling, data quality assertions, convenient HTML reports, and integration with popular data warehouses. You can now initialise PipeRider inside your dbt project, which brings PipeRider’s profiling, assertions, and reporting features to your dbt models. PipeRider will automatically detect your dbt project settings and treat your dbt models as if they were part of your PipeRider project. This includes:

How can TL Consulting help?

dbt (data build tool) has revolutionised data transformation and modelling with its code-driven approach, modular SQL-based models, and focus on data quality. It enables data teams to efficiently build scalable pipelines, express complex transformations, and ensure data consistency through built-in testing. By embracing dbt, organisations can unleash the full potential of their data, make informed decisions, and gain a competitive edge in the data-driven landscape.

TL Consulting have strong experience implementing dbt as part of the modern data stack. We provide advisory and transformation services in the data analytics and engineering domain and can help your business design and implement production-ready data platforms across multi-cloud environments to align with your business needs and transformation goals.
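
To make the built-in testing mentioned above more concrete, here is a minimal sketch of the idea behind dbt-style data tests: each test is a query that selects the rows violating a rule, and the test passes when the query returns no rows. This is not dbt itself. It uses Python's standard `sqlite3` module with a hypothetical `customers` table, and the check names and sample rows are assumptions made up for illustration.

```python
import sqlite3

# Hypothetical sample data standing in for the output of a dbt model.
ROWS = [
    (1, "alice@example.com"),
    (2, None),                 # missing email: violates the not-null rule
    (3, "carol@example.com"),
    (3, "dave@example.com"),   # repeated id: violates the uniqueness rule
]

# Each check is a query that selects the offending records, in the spirit
# of dbt's not_null and unique tests. The names are illustrative only.
CHECKS = {
    "not_null_customers_email":
        "SELECT id FROM customers WHERE email IS NULL",
    "unique_customers_id":
        "SELECT id FROM customers GROUP BY id HAVING COUNT(*) > 1",
}


def build_connection(rows):
    """Create an in-memory database holding the sample customers table."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER, email TEXT)")
    conn.executemany("INSERT INTO customers VALUES (?, ?)", rows)
    return conn


def run_checks(conn, checks):
    """Run every check and return {check_name: number_of_failing_rows}."""
    return {name: len(conn.execute(sql).fetchall())
            for name, sql in checks.items()}


if __name__ == "__main__":
    conn = build_connection(ROWS)
    for name, failures in run_checks(conn, CHECKS).items():
        status = "PASS" if failures == 0 else f"FAIL ({failures} failing)"
        print(f"{name}: {status}")
```

In a real dbt project these rules would normally be declared against a model's columns and run by dbt against the warehouse. The sketch only shows why a "zero rows returned" convention makes such checks easy to automate in a pipeline.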